Why I Stopped Trusting My Health AI to Know How I Feel

- Probal DasGupta

Entrepreneur. Storyteller. Systems Thinker. | Architect of Enterprises That Think | Founder & CEO.

December 19, 2025

Recently, my smartwatch buzzed during a client meeting. "Resting heart rate elevated 8% above baseline. Recommend cardiology consultation."

I wasn't having a heart attack. I'd just had three espressos because I'd slept four hours the night before. But for the next two days, I couldn't shake this low-grade dread. What if something's actually wrong?

Welcome to health tracking in 2025, where the line between helpful monitoring and digital hypochondria has become incredibly blurry.

The Seductive Promise

Here's what the future is supposed to look like: Your AI health agent notices your blood oxygen dips slightly during sleep. Not enough to wake you, but enough that, after three weeks of the same pattern, it schedules you for a sleep study. Turns out you have moderate sleep apnea. You get a CPAP machine. You avoid the heart disease that killed your uncle at 54.

This actually happens. A landmark Stanford Medicine study, published in the New England Journal of Medicine in 2019, found that a smartwatch could flag possible atrial fibrillation in people who had not sought medical care for it, often before they experienced any recognized symptoms.

There are even cases where these anomalies save lives. Take Nikki Gooding, a nurse practitioner whose story went viral. Her Oura ring didn’t tell her she had cancer. But it did flag that her resting heart rate was spiking and her body temperature remained elevated for weeks, metrics that didn't match her daily routine. The persistent data convinced her to investigate her vague symptoms of fatigue and night sweats. The diagnosis? Lymphoma. She credits the device with pushing her to seek answers earlier than she otherwise would have.

For people managing chronic conditions, AI agents are genuinely transformative. My colleague with Type 1 diabetes used to wake up at 3 AM to check her glucose levels. Now her AI agent predicts hypoglycaemic episodes 40 minutes in advance with 87% accuracy. It's not perfect, but it's given her the first uninterrupted sleep she's had in eight years.

But Then There's Reality

The same colleague also told me she can't go for a spontaneous run anymore without her AI agent freaking out. "Unusual exercise pattern detected outside scheduled workout window. Adjusting insulin recommendations. Hypoglycaemia risk elevated."

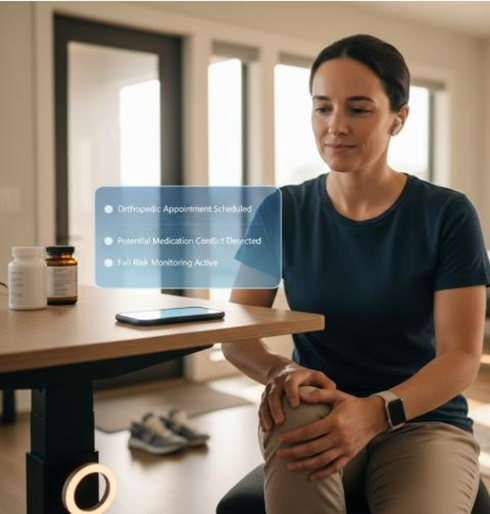

Beyond tracking: Automated agents that book your appointments and flag medication risks.She knows her body. She knows she's fine. But the algorithm doesn't know "spontaneous." It only knows "deviation from pattern." This is where things get complicated. AI health agents aren't making decisions the way doctors do. They're making decisions the way algorithms do; by finding patterns in data and flagging anomalies.

A physician colleague described it perfectly: "These systems are optimized for sensitivity, not specificity. They're designed to never miss anything serious. Which means they catch everything, serious or not."
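To put rough numbers on that trade-off, here is a minimal sketch of the standard positive predictive value calculation (Bayes' rule). The sensitivity, specificity, and prevalence figures are hypothetical, chosen only for illustration; they do not come from any real device or study.

```python
def positive_predictive_value(sensitivity: float, specificity: float, prevalence: float) -> float:
    """Probability that a flagged user actually has the condition (Bayes' rule)."""
    true_positives = sensitivity * prevalence
    false_positives = (1.0 - specificity) * (1.0 - prevalence)
    return true_positives / (true_positives + false_positives)


# Hypothetical numbers: a detector that almost never misses (99% sensitivity),
# is wrong about healthy users 10% of the time (90% specificity),
# and is watching for a condition that 1% of users actually have.
ppv = positive_predictive_value(sensitivity=0.99, specificity=0.90, prevalence=0.01)
print(f"{ppv:.1%} of alerts point to a real problem")  # 9.1% of alerts point to a real problem
```

Under these assumed numbers, roughly ten out of every eleven alerts are false alarms, even though the detector almost never misses a true case. The rarer the condition, the worse that ratio gets.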

The Decisions Your AI Will Make

Let's be concrete about what we're talking about. We aren't just talking about step counters anymore; we are talking about automated intervention. Your AI agent might:

Notice you mentioned knee pain in voice notes and automatically book an orthopaedist.

Detect you've been searching "constant fatigue," cross-reference it with your activity data, and recommend thyroid screening.

Flag that your new medication conflicts with a supplement you ordered online.

Share your medical history with emergency responders automatically if it detects a fall.

Adjust your standing desk reminders because you've been sitting 40% more than usual.

Some of this sounds great. Some of it sounds like your phone is turning into your mother.

The Problem Nobody's Talking About

Here's what keeps me up at night: Health anxiety doesn't come from major symptoms. It comes from uncertainty about minor ones. Psychologists have known this for decades. People with health anxiety aren't typically worried they have obvious heart attacks. They're worried about the weird twinge in their chest that might be a heart attack. The ambiguous stuff.

AI health agents are ambiguity generators. Every variation from your baseline becomes a potential signal. Your sleep efficiency dropped to 82%? Below optimal. Step count down 15% this week? Sedentary behaviour risk factor.

The medical term for what happens next is the "nocebo effect", when expecting negative health outcomes actually causes them. Tell people to watch for medication side effects, and they report experiencing those side effects at much higher rates, even when taking placebos.

Now imagine that scaled up. Your AI tells you your sleep is suboptimal, which makes you anxious, which makes your sleep worse, which causes the AI to escalate its concern. A Stanford psychologist called this "iatrogenic anxiety", illness caused by the diagnostic process itself. Except now the diagnostic process never stops.

The Tyranny of Optimal

AI agents are trained on optimization objectives. They trend everything toward "ideal ranges" that may not be ideal for you, in your life, with your actual circumstances.

I talked to a man whose health AI kept recommending statins because his LDL cholesterol was 115 mg/dL. Not high. Not concerning to his doctor. Just "suboptimal" according to population-level research. The AI flagged it every month until he eventually took the medication just to make the alerts stop.

When does preventive care become preventive harassment?

The Liability Gap

The really thorny question is about control. A friend's elderly mother has an AI agent that automatically orders refills and schedules check-ups. It's helpful; she has early dementia. But recently, the AI scheduled her for a cardiologist appointment because it detected "irregular heart rhythm patterns."

It turned out to be a sensor error from how she was wearing her smartwatch. But she spent three anxious weeks waiting for that appointment, convinced something was seriously wrong.

Who is liable here? If your AI generates so many false alarms that you start ignoring alerts, and then you miss the one real emergency, whose fault is that? We don't have answers to these questions yet, but we're deploying the technology anyway.

What Happens to Medical Judgment?

A physician friend told me something that stuck with me: "I'm starting to doubt my own clinical judgment when the AI disagrees with me."

She's a 20-year veteran internist. But increasingly, when the AI flags something and she thinks it's fine, she orders the test anyway. This is called "automation bias", the tendency to trust automated systems even when they're wrong.

When we defer to algorithms, we may be losing something we can't easily measure or replicate: clinical wisdom.

A Different Way Forward

What if we designed health AI differently?

Add Context: Alerts shouldn't just say "heart rate elevated." They should say, "Heart rate elevated, but you just climbed three flights of stairs. No action needed."

Transparency on Uncertainty: Instead of "suboptimal sleep detected," try: "Your sleep efficiency was 82%. Normal range is 70-95%. Only 3% of people with this pattern develop clinical sleep disorders."

Selectable Sensitivity: Let users choose their mode. "Alert me only for genuine emergencies" vs. "Alert me for all deviations so I can optimize everything."
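As a rough illustration of how the context and sensitivity ideas could fit together, here is a minimal sketch. Everything in it is hypothetical: the `Mode` names, the threshold values, the `Reading` fields, and the `build_alert` function are invented for this example and do not describe any real product. It only handles elevated heart rate, and the uncertainty disclosure from the second idea is left out.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Mode(Enum):
    EMERGENCIES_ONLY = "emergencies_only"
    ALL_DEVIATIONS = "all_deviations"


# Hypothetical thresholds: fractional rise above baseline needed before alerting.
THRESHOLDS = {Mode.EMERGENCIES_ONLY: 0.40, Mode.ALL_DEVIATIONS: 0.05}


@dataclass
class Reading:
    heart_rate: float              # current beats per minute
    baseline: float                # the user's usual resting rate
    context: Optional[str] = None  # known explanation, e.g. "climbed three flights of stairs"


def build_alert(reading: Reading, mode: Mode) -> Optional[str]:
    """Return an alert message, or None if the user's chosen mode says to stay quiet."""
    deviation = (reading.heart_rate - reading.baseline) / reading.baseline
    if deviation < THRESHOLDS[mode]:
        return None
    if reading.context:
        # A known explanation turns the alarm into a reassurance.
        return f"Heart rate elevated {deviation:.0%}, but you just {reading.context}. No action needed."
    return f"Heart rate elevated {deviation:.0%} above baseline with no known cause."


print(build_alert(Reading(65, 60), Mode.EMERGENCIES_ONLY))  # None
print(build_alert(Reading(65, 60), Mode.ALL_DEVIATIONS))
print(build_alert(Reading(90, 60, "climbed three flights of stairs"), Mode.ALL_DEVIATIONS))
```

The design choice that matters here is that the user, not the vendor, picks the threshold, and that a known explanation changes the message rather than being ignored.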

The Uncomfortable Truth

The question isn't whether AI will help manage our health. The question is whether we'll design these systems to serve human flourishing or algorithmic optimization. I still wear my smartwatch. I still check my health data. But I've learned to distinguish between the alerts that actually matter and the ones that are just algorithmic anxiety. The trick is keeping that distinction clear and not letting an algorithm convince me that every variation in my body is a problem that needs solving.

Selected Sources

New England Journal of Medicine: Large-Scale Assessment of a Smartwatch to Identify Atrial Fibrillation (Perez et al., 2019)

Annual Review of Psychology: Psychobiological Mechanisms of Placebo and Nocebo Effects (Petrie & Rief)

JAMIA: Automation bias: a systematic review (Goddard et al.)

Oura Ring & Health: The story of Nikki Gooding’s lymphoma detection
